multi-clone.py minimal user permissions

Half a year ago, I posted about a Python script I created using PySphere, called multi-clone.py. I used this script to quickly deploy multiple VMs from the same template and do some post-processing. This allowed me to easily set up a lab environment to test any sort of cluster tool, configuration management tool, …

This tool has been picked up by some other people. I’m happy to see my work is useful to others. This also meant that I got the occasional question about it.

Last week someone came to me with an issue: he got strange error messages. At first I thought it might be a version mismatch, as the original script was developed using PySphere 0.1.7 and he was using PySphere 0.1.8. After a quick update on my end and a test with PySphere 0.1.8, everything worked fine. I had the same vSphere version and the same PySphere version, and I ran the same command as he did. Sadly, I couldn’t reproduce the error.

At this point, all I could think of was a permissions error. So we tested whether the user could create a template with the exact same information using the web client. It turned out he couldn’t.

All this got me thinking about the minimal security permissions a user needs to run my script in a vSphere environment. So I tested a few permission setups and came up with a minimal permissions list. I added this to the GitHub repository readme file, but decided to post it here as well.

All permissions are only necessary on their appropriate item. For instance: datastore permissions are only necessary for the datastores on which the template and VMs will be located (or cluster if a Storage DRS cluster), so you can limit access to only a certain set of datastores.

Minimal permissions necessary to run multi-clone.py and all its features

- Datastore
  - Allocate space
- Network
  - Assign Network
- Resource
  - Apply recommendation
  - Assign virtual machine to resource pool
- Scheduled task
  - Create tasks
  - Run task
- Virtual Machine
  - Configuration
    - Add new disk
  - Interaction
    - Power on
  - Inventory
    - Create from existing
  - Provisioning
    - Clone virtual machine (*)
    - Deploy from template

- Configuration

(*) This is in case you want to use the script to clone an actual VM instead of a VM template
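To compare this list against the privileges of an existing role, it can help to keep it as data. The sketch below is only an illustration: the privilege IDs are an assumed mapping of the names above to the identifiers the vSphere API uses, so verify them against your own vCenter, and `missing_privileges` is a hypothetical helper that is not part of multi-clone.py.

```python
# Privilege IDs below are an ASSUMED mapping of the permission names in the
# list above; check them against your own vCenter version before use.
MINIMAL_PRIVILEGES = frozenset([
    "Datastore.AllocateSpace",
    "Network.Assign",
    "Resource.ApplyRecommendation",
    "Resource.AssignVMToPool",
    "ScheduledTask.Create",
    "ScheduledTask.Run",
    "VirtualMachine.Config.AddNewDisk",
    "VirtualMachine.Interact.PowerOn",
    "VirtualMachine.Inventory.CreateFromExisting",
    "VirtualMachine.Provisioning.Clone",  # only needed when cloning a real VM
    "VirtualMachine.Provisioning.DeployTemplate",
])


def missing_privileges(granted):
    """Return the required privileges that are absent from `granted`, sorted."""
    return sorted(MINIMAL_PRIVILEGES - set(granted))


if __name__ == "__main__":
    # Hypothetical role that was only given datastore and network access.
    role = ["Datastore.AllocateSpace", "Network.Assign"]
    for privilege in missing_privileges(role):
        print("missing: %s" % privilege)
```

The privileges of the role itself would have to come from the web client or the API; the helper only does the comparison.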

vCenter 5.5 Server Appliance quirks

Last week I upgraded my whole vSphere 5.1 environment to 5.5 and migrated to the vCenter 5.5 Server Appliance (VSA). Overall, I’m happy with this migration, as the appliance gives me everything I need and the new web client works amazingly well, both with Mac OS X and Windows.

But there are a few quirks and small issues with it. Nothing too serious, and as I understand it, the VMware engineers are looking into them. For those who are experiencing these issues, I wanted to provide a bit of explanation on how to fix them.

Quick stats on hostname is not up to date

The first issue I noticed was a message that kept appearing in the web client when I was looking at the summary of my hosts. At first I thought there was a DNS or connection issue, but I was able to manage my hosts, so that was all good.

When I started investigating the issue online, I noticed a few people reporting it, and VMware has already posted a KB article (KB 2061008) on it.

Let’s go to the simple steps on how to fix this on the VSA:

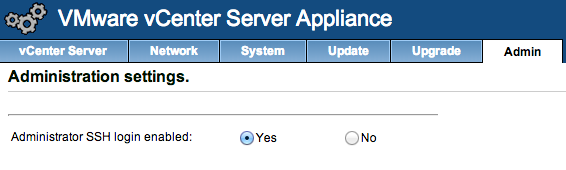

- Make sure SSH is enabled in your VSA admin panel:

- SSH to the VSA with user root and use the root password from the admin panel

- Copy the /etc/vmware-vpx/vpxd.cfg file to a safe location; you will keep this as a backup

- Open the /etc/vmware-vpx/vpxd.cfg file with an editor

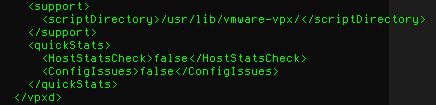

- Locate the </vpxd> tag

- Add the following text above that tag:

```
<quickStats>
<HostStatsCheck>false</HostStatsCheck>
<ConfigIssues>false</ConfigIssues>
</quickStats>
```

- It should more or less look like this:

- Save the file

- Restart your VSA. The easiest way is to reboot it from the admin panel or with the reboot command.

If you ever update the VSA, check the release notes: if this bug is fixed, you might want to remove these config values again.
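If you would rather script the edit than do it by hand, the text manipulation itself is small. The following is a minimal sketch, not something from the KB article: `add_quickstats` is a hypothetical helper that works on the file contents as a string, and it assumes the file contains exactly the closing `</vpxd>` tag described in the steps above.

```python
QUICKSTATS_BLOCK = (
    "<quickStats>\n"
    "<HostStatsCheck>false</HostStatsCheck>\n"
    "<ConfigIssues>false</ConfigIssues>\n"
    "</quickStats>\n"
)


def add_quickstats(config_text):
    """Insert the quickStats block just above the closing </vpxd> tag.

    Returns the text unchanged if a quickStats block is already present,
    and raises ValueError if there is no </vpxd> tag to anchor on.
    """
    if "<quickStats>" in config_text:
        return config_text
    head, tag, tail = config_text.rpartition("</vpxd>")
    if not tag:
        raise ValueError("no </vpxd> tag found")
    return head + QUICKSTATS_BLOCK + tag + tail


if __name__ == "__main__":
    # Made-up miniature config, only to show where the block ends up.
    sample = "<config>\n<vpxd>\n<das/>\n</vpxd>\n</config>\n"
    print(add_quickstats(sample))
```

On the appliance you would read /etc/vmware-vpx/vpxd.cfg, write the result back only after making the backup copy, and then restart as described above.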

Unable to connect to HTML5 VM Console

After a reboot of my VSA, I was unable to open the HTML5 VM Console from the web client. I got “Could not connect to x.x.x.x:7331”; the service seemed to be down. VMware is aware of this issue and a KB article (KB 2060604) is available.

The cause of this issue is a missing environment variable (VMWARE_JAVA_HOME). To make VSA aware of this variable, you can follow these steps:

- Make sure SSH is enabled in your VSA admin panel (see screenshot in step 1 of the issue above)

- SSH to the VSA with user root and the root password from the admin panel

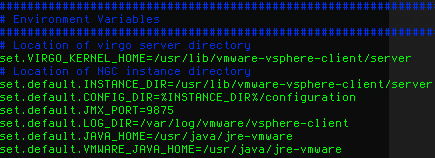

- Open the /usr/lib/vmware-vsphere-client/server/wrapper/conf/wrapper.conf file with an editor

- Locate the Environment Variables part

- Add the following text to the list of environment variables:

```
set.default.VMWARE_JAVA_HOME=/usr/java/jre-vmware
```

- It should look more or less like this:

- Save the file

- Restart the vSphere Web client using:

```
/etc/init.d/vsphere-client restart
```

That should fix the issue and the HTML5 VM Console should work fine!
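The same change can be sketched in a few lines of Python. This is an illustration only: `add_java_home` is a hypothetical helper, and it assumes the environment variables in wrapper.conf are the lines starting with `set.`, which is how Java Service Wrapper configuration files usually declare them.

```python
JAVA_HOME_LINE = "set.default.VMWARE_JAVA_HOME=/usr/java/jre-vmware"


def add_java_home(conf_text):
    """Add the VMWARE_JAVA_HOME line after the last existing `set.` line.

    If no `set.` line exists, the new line is appended at the end. The text
    is returned unchanged when the variable is already defined.
    """
    lines = conf_text.splitlines()
    if any(l.strip().startswith("set.default.VMWARE_JAVA_HOME=") for l in lines):
        return conf_text
    set_rows = [i for i, l in enumerate(lines) if l.strip().startswith("set.")]
    insert_at = set_rows[-1] + 1 if set_rows else len(lines)
    lines.insert(insert_at, JAVA_HOME_LINE)
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    # Made-up fragment; the real wrapper.conf has many more settings.
    sample = (
        "# Environment Variables\n"
        "set.default.JAVA_HOME=/usr/java\n"
        "wrapper.java.command=java\n"
    )
    print(add_java_home(sample))
```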

Migrate vCenter 5.1 Standard to vCenter 5.5 Server Appliance with Distributed vSwitch and Update Manager

At VMworld San Francisco, VMware announced vSphere 5.5 and they officially released it a couple of days ago. With this new version of vSphere, the vCenter Server Appliance has been updated as well.

With this new version, the maximums have been increased. In 5.1, the vCenter Server Appliance was only usable in small environments, with a maximum of 5 hosts and 50 VMs when using the internal database. If you had more hosts and/or VMs, you had to connect your vCenter to an Oracle database. (Thanks Bert for noting this)

As of version 5.5, these limits have been raised to 100 hosts and 3000 VMs. With this change, the vCenter Server Appliance becomes a viable alternative to a full-fledged install on a Windows Server.

Until now I have always used vCenter as a full-fledged install on Windows Server, with an SQL Server, in my home lab. I used this setup to get a feel for running, maintaining and upgrading vCenter and all its components, while using multiple Windows servers in a test domain. But with this new release, I’ve decided to migrate to the appliance and do a semi-fresh install.

I say semi-fresh, as I will migrate a few settings to this new vCenter server. Most settings will be handled manually or through the hosts, but the Distributed vSwitch is a bit more complicated. So I wanted to write down the steps I used to migrate from my standard setup to the appliance.

1. Export DvSwitch

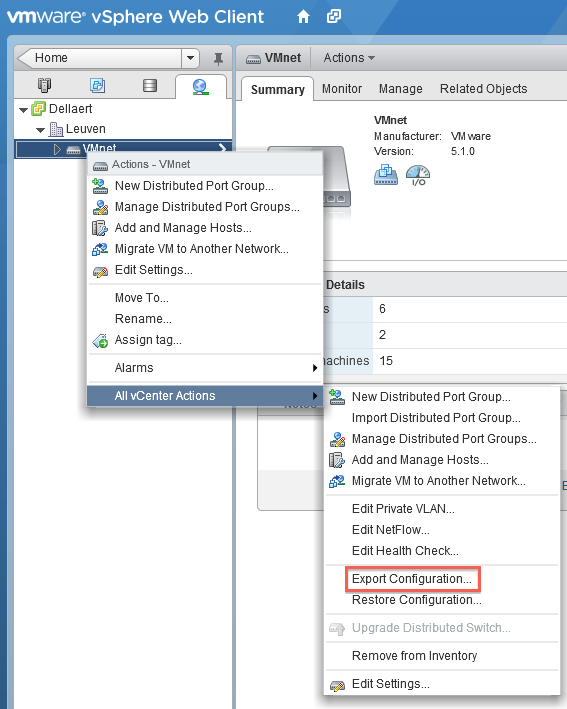

You can export your DvSwitch using the web client with a few easy steps.

Go to the Distributed vSwitch you want to migrate, right-click it, go to All vCenter actions and select Export Configuration. Make sure you export all port groups and save the file to a convenient location.

2. Create a cluster in the new vCenter Server Appliance

Make sure the cluster has the same settings as the one in the old vCenter server. Focus on the EVC settings, the rest can be as you choose, but this is rather important if you are migrating live hosts and VMs.

3. Disable High Availability on the cluster

As you need to move hosts away from the cluster, you will have to disable the High Availability on it.

4. Disconnect the hosts from the old vCenter server and connect them to the new vCenter Server Appliance

At this point, you need to disconnect the hosts from the old vCenter server and connect them to the new vCenter Server Appliance. This might take a while, so be patient and watch the progress.

Your hosts might show a warning indicating an issue, but this can be safely ignored, as it will be solved after the import of the Distributed vSwitch.

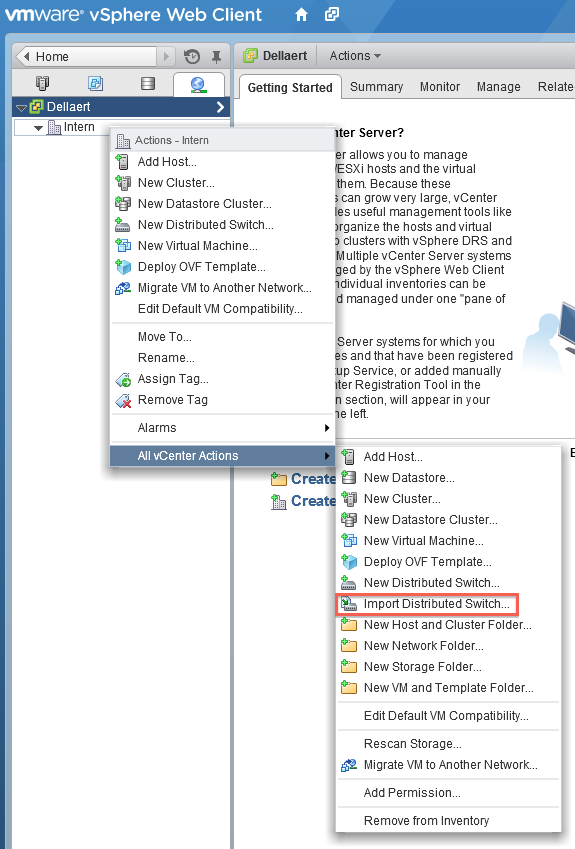

5. Import the Distributed vSwitch into the new vCenter Server Appliance

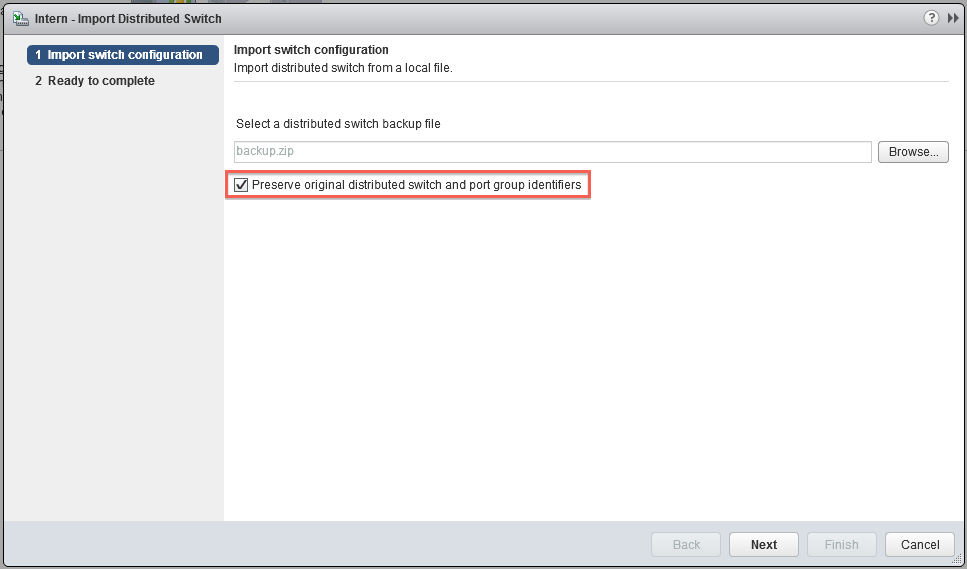

Go to the network tab, right-click the cluster, go to All vCenter actions and select Import Distributed Switch.

Make sure you select the ‘Preserve original distributed switch and port group identifiers’ option.

Give it a bit of time and your hosts will recognise the switch, and everything will be synced and connected again.

6. Update manager

There is one small issue with the great vCenter Server Appliance: it lacks an Update Manager in its regular setup. Luckily, you can connect a standard Update Manager install to the vCenter Server Appliance. I would suggest you just follow the standard guide. This one is still for vSphere 5.1, but the 5.5 version hasn’t changed much, so it should be pretty straightforward.

*update* Added extra information on the limitation of vCenter Server Appliance 5.1 (Oracle DB possibility)

PySphere script to clone a template into multiple VMs with post processing

*There is a better/newer version of this script, for more details, check this post: https://dellaert.org/2014/02/03/multi-clone-py-multi-threaded-cloning-of-a-template-to-multiple-vms/ – Or via github: https://github.com/pdellaert/vSphere-Python *

For an internal test setup I needed to be able to deploy multiple VMs from a template without too much hassle. So I started thinking that the best way to approach this would be a script. At first I thought I had to do this using PowerCLI, as this is the preferred VMware way of scripting.

Luckily I came across the wonderful site of PySphere, a Python library that provides tools to access and administer a VMware vSphere setup. As Python wasn’t my strong suit, I was in a bit of a dilemma: I had almost no experience with either of those languages, so which to go for? Although PowerCLI/PowerShell has a lot more possibilities, as it is maintained and developed by VMware itself, Python had the great advantage that I could do it all in a more familiar environment (Linux). It’s also closer to the languages I know than PowerShell is. So I decided to just go for Python and see if it got me where I wanted to go.

This script deploys multiple VMs from a single template. You can specify how many VMs to deploy and what basename they should have. Each VM gets a name starting with the basename and a number which increments with each new VM. You are able to specify at what number it should start. You can also specify in which resource pool the VMs should be placed.

And as a final feature, you can specify a script which should be called after the VM has successfully booted and the guest OS has initialised its network interface. This script will be called with two arguments: the VM name and its IP. You can even specify that it may only return a valid IPv6 address (I needed this to deploy VMs in an IPv6-only test environment).
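To make the post-processing hook concrete, here is a minimal sketch of what such a script could look like. It is a hypothetical example, not one of the scripts used for this post: all multi-clone.py expects is an executable that accepts the VM name and the IP address as its two arguments, and the sketch exits with status 0 on success. The hosts-file style output is just an assumption about what you might want to do with the two values.

```python
#!/usr/bin/env python3
"""Hypothetical post-processing script for the -p option of multi-clone.py."""
import sys


def format_entry(name, ip):
    """Build one hosts-file style line for a freshly deployed VM."""
    return "%s\t%s" % (ip, name)


def main(argv):
    # multi-clone.py calls the script as: <script> <vm-name> <ip-address>
    if len(argv) != 3:
        sys.stderr.write("usage: %s <vm-name> <ip-address>\n" % argv[0])
        return 2
    print(format_entry(argv[1], argv[2]))
    return 0


# Only act when arguments were actually passed on the command line.
if __name__ == "__main__" and len(sys.argv) > 1:
    sys.exit(main(sys.argv))
```

Redirecting the output to a file would give you a simple inventory of the deployed VMs.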

The output if run with the help argument (-h):

```
philippe@Draken-Korin:~/VMware/vSphere-Python$ ./multi-clone.py -h
usage: multi-clone.py [-h] [-6] -b BASENAME [-c COUNT] [-n AMOUNT]
                      [-p POST_SCRIPT] [-r RESOURCE_POOL] -s SERVER -t
                      TEMPLATE -u USERNAME [-v] [-w MAXWAIT]

Deploy a template into multiple VM's

optional arguments:
  -h, --help            show this help message and exit
  -6, --six             Get IPv6 address for VMs instead of IPv4
  -b BASENAME, --basename BASENAME
                        Basename of the newly deployed VMs
  -c COUNT, --count COUNT
                        Starting count, the name of the first VM deployed will
                        be <basename>-<count>, the second will be
                        <basename>-<count+1> (default=1)
  -n AMOUNT, --number AMOUNT
                        Amount of VMs to deploy (default=1)
  -p POST_SCRIPT, --post-script POST_SCRIPT
                        Script to be called after each VM is created and
                        booted. Arguments passed: name ip-address
  -r RESOURCE_POOL, --resource-pool RESOURCE_POOL
                        The resource pool in which the new VMs should reside
  -s SERVER, --server SERVER
                        The vCenter or ESXi server to connect to
  -t TEMPLATE, --template TEMPLATE
                        Template to deploy
  -u USERNAME, --user USERNAME
                        The username with which to connect to the server
  -v, --verbose         Enable verbose output
  -w MAXWAIT, --wait-max MAXWAIT
                        Maximum amount of seconds to wait when gathering
                        information (default 120)
```

To run the script, the command should look something like this:

```
philippe@Draken-Korin:~/VMware/scripts$ ./multi-clone.py -s vcenter.server.domain.tld -u DOMAIN\\USER -t VM-Template -b Deployed-VM -c 1 -n 10 -r TestRP -p ./post-process.sh -6 -v -w 120
```

And finally, the script itself. You can also download it on GitHub:

```python
#!/usr/bin/python
# Note: Python 2 syntax (print statements, dict.iteritems)
import sys, re, getpass, argparse, subprocess
from time import sleep
from pysphere import MORTypes, VIServer, VITask, VIProperty, VIMor, VIException
from pysphere.vi_virtual_machine import VIVirtualMachine

def print_verbose(message):
    if verbose:
        print message

def find_vm(name):
    try:
        vm = con.get_vm_by_name(name)
        return vm
    except VIException:
        return None

def find_resource_pool(name):
    rps = con.get_resource_pools()
    for mor, path in rps.iteritems():
        print_verbose('Parsing RP %s' % path)
        if re.match('.*%s' % name,path):
            return mor
    return None

def run_post_script(name,ip):
    print_verbose('Running post script: %s %s %s' % (post_script,name,ip))
    retcode = subprocess.call([post_script,name,ip])
    # Any non-zero exit status (not only a negative one) counts as a failure
    if retcode != 0:
        print 'ERROR: %s %s %s : Returned a non-zero result' % (post_script,name,ip)
        sys.exit(1)

def find_ip(vm,ipv6=False):
    net_info = None
    waitcount = 0
    # Poll the guest network information until it is available or maxwait expires
    while net_info is None:
        if waitcount > maxwait:
            break
        net_info = vm.get_property('net',False)
        print_verbose('Waiting 5 seconds ...')
        waitcount += 5
        sleep(5)
    if net_info:
        for ip in net_info[0]['ip_addresses']:
            # IPv6 groups are hexadecimal; skip link-local addresses (fe80::/10)
            if ipv6 and re.match(r'[0-9a-fA-F]{1,4}:.*',ip) and not re.match(r'fe[89ab][0-9a-f]:.*',ip,re.I):
                print_verbose('IPv6 address found: %s' % ip)
                return ip
            elif not ipv6 and re.match(r'\d{1,3}\.\d{1,3}\.\d{1,3}\.\d{1,3}',ip) and ip != '127.0.0.1':
                print_verbose('IPv4 address found: %s' % ip)
                return ip
    print_verbose('Timeout expired: No IP address found')
    return None

parser = argparse.ArgumentParser(description="Deploy a template into multiple VM's")
parser.add_argument('-6', '--six', required=False, help='Get IPv6 address for VMs instead of IPv4', dest='ipv6', action='store_true')
parser.add_argument('-b', '--basename', nargs=1, required=True, help='Basename of the newly deployed VMs', dest='basename', type=str)
parser.add_argument('-c', '--count', nargs=1, required=False, help='Starting count, the name of the first VM deployed will be <basename>-<count>, the second will be <basename>-<count+1> (default=1)', dest='count', type=int, default=[1])
parser.add_argument('-n', '--number', nargs=1, required=False, help='Amount of VMs to deploy (default=1)', dest='amount', type=int, default=[1])
parser.add_argument('-p', '--post-script', nargs=1, required=False, help='Script to be called after each VM is created and booted. Arguments passed: name ip-address', dest='post_script', type=str)
parser.add_argument('-r', '--resource-pool', nargs=1, required=False, help='The resource pool in which the new VMs should reside', dest='resource_pool', type=str)
parser.add_argument('-s', '--server', nargs=1, required=True, help='The vCenter or ESXi server to connect to', dest='server', type=str)
parser.add_argument('-t', '--template', nargs=1, required=True, help='Template to deploy', dest='template', type=str)
parser.add_argument('-u', '--user', nargs=1, required=True, help='The username with which to connect to the server', dest='username', type=str)
parser.add_argument('-v', '--verbose', required=False, help='Enable verbose output', dest='verbose', action='store_true')
parser.add_argument('-w', '--wait-max', nargs=1, required=False, help='Maximum amount of seconds to wait when gathering information (default 120)', dest='maxwait', type=int, default=[120])

args = parser.parse_args()

# nargs=1 returns one-element lists, so unpack the values
ipv6 = args.ipv6
amount = args.amount[0]
basename = args.basename[0]
count = args.count[0]
post_script = None
if args.post_script:
    post_script = args.post_script[0]
resource_pool = None
if args.resource_pool:
    resource_pool = args.resource_pool[0]
server = args.server[0]
template = args.template[0]
username = args.username[0]
verbose = args.verbose
maxwait = args.maxwait[0]

# Asking the user's password for the server
password=getpass.getpass(prompt='Enter password for vCenter %s for user %s: ' % (server,username))

# Connecting to server
print_verbose('Connecting to server %s with username %s' % (server,username))
con = VIServer()
con.connect(server,username,password)
print_verbose('Connected to server %s' % server)
print_verbose('Server type: %s' % con.get_server_type())
print_verbose('API version: %s' % con.get_api_version())

# Verify the template exists
print_verbose('Finding template %s' % template)
template_vm = find_vm(template)
if template_vm is None:
    print 'ERROR: %s not found' % template
    sys.exit(1)
print_verbose('Template %s found' % template)

# Verify the target Resource Pool exists
print_verbose('Finding resource pool %s' % resource_pool)
resource_pool_mor = find_resource_pool(resource_pool)
if resource_pool_mor is None:
    print 'ERROR: %s not found' % resource_pool
    sys.exit(1)
print_verbose('Resource pool %s found' % resource_pool)

# List with VM name elements for post script processing
vms_to_ps = []
# Looping through amount that needs to be created
for a in range(1,amount+1):
    print_verbose('================================================================================')
    vm_name = '%s-%i' % (basename,count)
    print_verbose('Trying to clone %s to VM %s' % (template,vm_name))
    if find_vm(vm_name):
        print 'ERROR: %s already exists' % vm_name
    else:
        clone = template_vm.clone(vm_name, True, None, resource_pool_mor, None, None, False)
        print_verbose('VM %s created' % vm_name)

        print_verbose('Booting VM %s' % vm_name)
        clone.power_on()

        if post_script:
            vms_to_ps.append(vm_name)
    count += 1

# Looping through post scripting if necessary
if post_script:
    for name in vms_to_ps:
        vm = find_vm(name)
        if vm:
            ip = find_ip(vm,ipv6)
            if ip:
                run_post_script(name,ip)
            else:
                print 'ERROR: No IP found for VM %s, post processing disabled' % name
        else:
            print 'ERROR: VM %s not found, post processing disabled' % name

# Disconnecting from server
con.disconnect()
```

The script is still a work in progress, as there are a lot of possibilities for improvement. If you have any requests, feel free to contact me!

This is the first Python script I’ve ever written, so forgive me if I made some basic mistakes against best practices. Feel free to submit a patch.

How to setup an IPv6-only network with NAT64, DNS64 and Shorewall

Goal

The goal of this article is to help people set up a network that is IPv6-only (except for the gateway) while still allowing the users to access IPv4 servers beyond the gateway.

Overview

If you follow the news surrounding the IPv4 exhaustion, you will know that IPv4 is running out of space rapidly (RIPE is currently allocating the last /8 address space). So, it’s time to start thinking about moving to IPv6.

I have been using a 6-in-4 tunnel from Sixxs for a couple of years now. Using this, I have set up a dual stack network with my own /48 subnet. This setup is fun and made it possible for me to test IPv6 in real life.

I’ve been using this setup for a while now, and though it’s a step towards getting ready for IPv6, it still has an IPv4 network as well. The ultimate goal should be to use only IPv6 in my internal network. The downside of such a network is that I would be unable to reach ‘old’ IPv4 servers which haven’t got an IPv6 address.

To solve this, I decided to configure an IPv6-only network in a test environment, using NAT64 and DNS64. DNS64 basically provides IPv6 addresses for hostnames which only return an IPv4 address, using a prefix. NAT64 accepts connections to those special IPv6 addresses and translates the connection to an IPv4 connection. It’s doing the same thing as normal NAT, translating IP addresses, just across different IP versions.

I’m using this guide as a form of documentation. I might go a bit fast through a couple of sections, but that’s mainly because I assume you are able to configure a basic Bind9 server or Shorewall setup.

It’s also important to note that I will not provide exact commands to install a package, but all of the packages should be available in most package systems (aptitude/apt, yum, …).

Initial setup

Let’s start with an overview of my setup and the information you need to set this up.

My gateway is an Ubuntu 12.04 LTS server which is connected to 2 networks and has a Sixxs connection using AICCU, which is configured to start at boot. In total this gives me 3 interfaces (and loopback, but we’ll disregard that).

Interfaces configurations:

- eth0: DHCP (internet)

- eth1: Static IPv6: 2001:1d3:7f2:10::1/64 (you receive a /48 from Sixxs, split it up in at least /64 subnets)

- sixxs: IPv6-in-IPv4 tunnel
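The split of the /48 into /64 subnets can be checked with Python’s standard `ipaddress` module. This is a small sketch for illustration: `nth_subnet` is a hypothetical helper, and the index 0x10 is simply the fourth group of the eth1 address above.

```python
import ipaddress


def nth_subnet(allocation, index, new_prefix=64):
    """Return subnet number `index` of size /new_prefix inside `allocation`."""
    net = ipaddress.IPv6Network(allocation)
    if not net.prefixlen <= new_prefix <= 128:
        raise ValueError("new_prefix must be between the allocation size and 128")
    count = 2 ** (new_prefix - net.prefixlen)
    if not 0 <= index < count:
        raise IndexError("allocation only holds %d subnets" % count)
    # Shift the index into the subnet bits that follow the allocation prefix.
    base = int(net.network_address) + (index << (128 - new_prefix))
    return ipaddress.IPv6Network("%s/%d" % (ipaddress.IPv6Address(base), new_prefix))


if __name__ == "__main__":
    # A /48 holds 2**16 = 65536 different /64 subnets.
    print(nth_subnet("2001:1d3:7f2::/48", 0x10))
```

With a /48 there is room for 65536 such subnets, so using one for the internal network leaves plenty for other purposes.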

List of software used. This contains only daemons specific for this guide:

- Bind9: DNS server

- Shorewall

- Shorewall6

- radvd

I’ll assume that your gateway is able to connect to the internet using DHCP on the eth0 interface, which provides you with an IP(v4) from your ISP and that your Sixxs tunnel is configured.

Bind9

Configure bind9 as you like, just make sure you have configured forwarders that can be used and recursion is enabled.

Shorewall

Make sure IP_FORWARDING=On. Here are my basic configuration files:

Zones

```
#ZONE   TYPE        OPTIONS     IN OPTIONS      OUT OPTIONS
fw      firewall
net     ipv4
```

Interfaces

```
#ZONE   INTERFACE   BROADCAST   OPTIONS
net     eth0        detect      dhcp,tcpflags,routefilter,nosmurfs,logmartians
```

No need to add eth1, as it does not have an IPv4 address.

Policy

```
###########################################################################
#SOURCE     DEST        POLICY      LOG         LIMIT:BURST
#                                   LEVEL
net         $FW         DROP        info
$FW         net         ACCEPT
# CATCH ALL
all         all         REJECT      info
```

For security reasons I drop everything coming in from the dangerous internet…

Shorewall6

As with Shorewall, make sure IP_FORWARDING=On. The purpose of this configuration is to block all IPv6 traffic coming in from the internet, but allow clients connected to the gateway through the internal network, to access the internet through the Sixxs tunnel.

Basic configuration files:

Zones

```
#ZONE   DISPLAY     COMMENTS
fw      firewall
net     ipv6
loc     ipv6
```

Interfaces

```
#ZONE   INTERFACE   BROADCAST   OPTIONS
net     sixxs       detect      tcpflags,nosmurfs,forward=1
loc     eth1        detect      tcpflags,forward=1
```

Policy

```
###########################################################################
#SOURCE     DEST        POLICY      LOG         LIMIT:BURST
#                                   LEVEL
$FW         net         ACCEPT
$FW         loc         ACCEPT
loc         net         ACCEPT
loc         $FW         ACCEPT
net         $FW         DROP        info
net         loc         DROP        info
# CATCH ALL
all         all         REJECT      info
```

Again I block everything coming in from the dangerous internet.

radvd

radvd is used to provide stateless configuration for IPv6 interfaces. After you have acquired your subnet from Sixxs and configured your tunnel, you can set up radvd to provide your clients with the information they need to access the IPv6 network.

My configuration:

```
interface eth1
{
    AdvSendAdvert on;
    prefix 2001:1d3:7f2:10::/64
    {
        AdvOnLink on;
        AdvAutonomous on;
        AdvRouterAddr on;
    };
};
```

Configuring DNS64

Configuring DNS64 is not that hard: you just need to tell Bind that it has to return a special AAAA record if a client from a specific range requests the IP address of a hostname that has no AAAA record. This AAAA record is constructed by Bind, using a prefix. When a client tries to connect to an IP starting with the prefix, it will be forwarded (through routing) to the NAT64 setup.

Prefix

First off, you should decide on a prefix to use. This prefix must be part of your personal /48 subnet (so it doesn’t interfere with other possible real IP addresses). You must commit at least a /96 subnet to this prefix.

As 2001:1d3:7f2::/48 is the subnet provided by Sixxs to me, I decided to use 2001:1d3:7f2:ffff::/96 as my prefix.
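A /96 is the natural size because it leaves exactly 32 bits, enough to hold a complete IPv4 address. The sketch below shows how a synthesized AAAA address is built from the prefix. It only mimics the address arithmetic, not Bind itself; `synthesize_aaaa` is a hypothetical helper and 192.0.2.33 is a documentation address picked as an example.

```python
import ipaddress

# The prefix chosen above; the last 32 bits are left for the IPv4 address.
NAT64_PREFIX = ipaddress.IPv6Network("2001:1d3:7f2:ffff::/96")


def synthesize_aaaa(ipv4, prefix=NAT64_PREFIX):
    """Embed an IPv4 address in the low 32 bits of a /96 prefix."""
    if prefix.prefixlen != 96:
        raise ValueError("this sketch only handles /96 prefixes")
    v4 = ipaddress.IPv4Address(ipv4)
    return ipaddress.IPv6Address(int(prefix.network_address) | int(v4))


if __name__ == "__main__":
    # 192.0.2.33 is c0.00.02.21 in hexadecimal, so it shows up as c000:221.
    print(synthesize_aaaa("192.0.2.33"))
```

For an IPv4-only host at 192.0.2.33, clients would receive 2001:1d3:7f2:ffff::c000:221, which routes to the NAT64 setup.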

radvd configuration

We need to make the clients on the network aware of the IPv6 DNS server on the network, so change your /etc/radvd.conf and add the RDNSS option:

```
interface eth1
{
    AdvSendAdvert on;
    prefix 2001:1d3:7f2:10::/64
    {
        AdvOnLink on;
        AdvAutonomous on;
        AdvRouterAddr on;
    };
    RDNSS 2001:1d3:7f2:10::1
    {
    };
};
```

Bind configuration

Now we need to change named.conf.options to contain the following section (inside options, for instance after your forwarders):

```
dns64 2001:1d3:7f2:ffff::/96 {
    clients {
        2001:1d3:7f2:10::/64;
    };
};
```

The clients option makes sure that only clients on the internal network (2001:1d3:7f2:10::/64, connected to eth1) can use the DNS64 service.

Configuring NAT64

To configure NAT64, you have to install an extra daemon: Tayga. Tayga creates a new interface on your server which basically is an internal tunnel through which connections to your prefix network are routed and translated to IPv4 connections. This means both firewalls (Shorewall and Shorewall6) need to be aware of this interface.
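Conceptually, the translation starts by recovering the IPv4 destination from the last 32 bits of the IPv6 address the client connected to. The following sketch illustrates only that step, under the assumption of a /96 prefix; it is not Tayga’s actual code, and `embedded_ipv4` is a hypothetical helper.

```python
import ipaddress


def embedded_ipv4(ipv6, prefix):
    """Return the IPv4 address stored in the low 32 bits of `ipv6`.

    Raises ValueError when the address is not inside the NAT64 prefix.
    """
    addr = ipaddress.IPv6Address(ipv6)
    net = ipaddress.IPv6Network(prefix)
    if net.prefixlen != 96:
        raise ValueError("this sketch only handles /96 prefixes")
    if addr not in net:
        raise ValueError("%s is not inside %s" % (addr, net))
    return ipaddress.IPv4Address(int(addr) & 0xFFFFFFFF)


if __name__ == "__main__":
    print(embedded_ipv4("2001:1d3:7f2:ffff::c000:221", "2001:1d3:7f2:ffff::/96"))
```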

Tayga configuration

You will have to make some changes in the Tayga configuration (/etc/tayga.conf). Here are the settings I have changed and use:

```
tun-device nat64
ipv4-addr 192.168.10.1
prefix 2001:1d3:7f2:ffff::/96
dynamic-pool 192.168.10.0/24
```

Tayga needs an IPv4 address, as it needs to communicate with the IPv4 network. It also needs an IPv6 address, but it determines that itself.

The dynamic-pool option is used to select IP addresses for the IPv6 clients. So each IPv6 client that wants to connect to an IPv4 server gets an IPv4 address linked to it in Tayga (so not on the client, only for internal NAT purposes). If you use a /24 subnet, you can basically have 254 clients connecting to IPv4 servers simultaneously. If you need more, you are allowed to use bigger subnets. Just make sure you use the private address ranges reserved for internal use (RFC 1918).
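The number of simultaneous clients for a given pool size is easy to compute. The sketch below is only a rough estimate: `pool_capacity` is a hypothetical helper, and whether Tayga takes its own ipv4-addr out of the pool is an assumption made here, not something verified against Tayga.

```python
import ipaddress


def pool_capacity(pool, reserved=()):
    """Count the host addresses in `pool` that are not listed in `reserved`."""
    net = ipaddress.IPv4Network(pool)
    # The network and broadcast addresses are not usable as host addresses.
    usable = max(net.num_addresses - 2, 0)
    edges = (net.network_address, net.broadcast_address)
    taken = {ipaddress.IPv4Address(a) for a in reserved}
    in_pool = [a for a in taken if a in net and a not in edges]
    return usable - len(in_pool)


if __name__ == "__main__":
    print(pool_capacity("192.168.10.0/24"))                    # all host addresses
    print(pool_capacity("192.168.10.0/24", ["192.168.10.1"]))  # minus Tayga's own
    print(pool_capacity("192.168.0.0/16"))                     # a bigger pool
    # The pool should sit in private address space.
    print(ipaddress.IPv4Network("192.168.10.0/24").is_private)
```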

Shorewall configuration

I chose to configure Shorewall in a similar fashion as before: all traffic from the internet is blocked, all traffic to the internet is allowed. You need to make Shorewall aware of the nat64 interface, as it needs to allow IPv4 traffic to go to and from it, otherwise the translation won’t work.

These are the changes I made to the Shorewall (IPv4) configurations:

Zones

```
#ZONE   TYPE        OPTIONS     IN OPTIONS      OUT OPTIONS
fw      firewall
net     ipv4
nat64   ipv4
```

Interfaces

```
#ZONE   INTERFACE   BROADCAST   OPTIONS
net     eth0        detect      dhcp,tcpflags,routefilter,nosmurfs,logmartians
nat64   nat64       detect      dhcp,tcpflags,routefilter,nosmurfs,logmartians,routeback
```

No need to add eth1, as it does not have an IPv4 address.

Policy

```
###########################################################################
#SOURCE     DEST        POLICY      LOG         LIMIT:BURST
#                                   LEVEL
net         $FW         DROP        info
net         nat64       DROP        info
$FW         net         ACCEPT
$FW         nat64       ACCEPT
nat64       net         ACCEPT
nat64       $FW         ACCEPT
# CATCH ALL
all         all         REJECT      info
```

Shorewall6 configuration

You need to make Shorewall6 aware of the nat64 interface, as IPv6 traffic needs to go to and from it.

These are the changes I made to the Shorewall6 (IPv6) configurations:

Zones

```
#ZONE   DISPLAY     COMMENTS
fw      firewall
net     ipv6
loc     ipv6
nat64   ipv6
```

Interfaces

```
#ZONE   INTERFACE   BROADCAST   OPTIONS
net     sixxs       detect      tcpflags,nosmurfs,forward=1
loc     eth1        detect      tcpflags,forward=1
nat64   nat64       detect      tcpflags,forward=1
```

Policy

```
###########################################################################
#SOURCE     DEST        POLICY      LOG         LIMIT:BURST
#                                   LEVEL
$FW         net         ACCEPT
$FW         loc         ACCEPT
$FW         nat64       ACCEPT
loc         net         ACCEPT
loc         $FW         ACCEPT
loc         nat64       ACCEPT
nat64       net         ACCEPT
nat64       $FW         ACCEPT
nat64       loc         ACCEPT
net         $FW         DROP        info
net         loc         DROP        info
net         nat64       DROP        info
# CATCH ALL
all         all         REJECT      info
```

That should do it. If you restart the services (bind9, radvd, tayga, shorewall and shorewall6), your gateway is ready to provide an IPv6-only network with connectivity to the internet, both IPv6 (through the Sixxs tunnel) and IPv4 (through your ISP’s connection).

Clients

You do need to prepare your clients to work on this network. This setup has been created with Linux clients in mind, but I’ll try to give an overview of what needs to be changed if you want to support Windows (7/8) and Mac OS X.

Linux clients

Linux clients need to install the RDNSSD daemon. This daemon will use the RDNSS information provided by radvd and change the /etc/resolv.conf file to include it. This way you won’t need to configure the resolv.conf yourself.

If you do not want to use the RDNSSD daemon, you will have to change your setup and use DHCPv6, which would mean you have to install the DHCPv6 daemon on the gateway and configure it, and install the DHCPv6 client on the client (which is in most cases part of the standard DHCP client of your distribution).

Mac OS X

From Mac OS X Lion on, Mac OS X is able to accept the RDNSS information from radvd. DHCPv6 is also supported. So there is no need for further configuration.

Windows

Windows Vista, 7 & 8 do not have support for RDNSS; you can provide this by installing rdnssd-win32. They do support DHCPv6, however, so it might be easier to just configure that.

Windows XP is just unable to use IPv6 without installing Dibbler. This tool provides Windows XP with DHCPv6 support.

Final thoughts

I kept my configuration simple on purpose, so if you’d like to add complex rules and policies to Shorewall(6) to protect your network, you can do so as you normally would. The only thing to remember is the flow of traffic from an IPv6-only client to an IPv4-only server:

```
Client IPv6 -> DNS call target (IPv4-only) hostname -> GW IPv6
GW IPv6 -> DNS result (IPv6 address linked to IPv4 target server) -> Client IPv6
Client IPv6 -> packet -> GW IPv6
GW IPv6 -> routes to NAT64 -> GW NAT64 IPv6
GW NAT64 IPv6 -> NAT64 processing -> GW NAT64 IPv4
GW NAT64 IPv4 -> uses standard NAT -> GW IPv4 (external)
GW IPv4 (external) -> packet -> Target IPv4
Target IPv4 -> reply -> GW IPv4 (external)
GW IPv4 (external) -> Reverses NAT -> GW NAT64 IPv4
GW NAT64 IPv4 -> reverse NAT64 processing -> GW NAT64 IPv6
GW NAT64 IPv6 -> routes to GW (internal interface) -> GW IPv6
GW IPv6 -> packet -> Client IPv6
```

You need to remember that flow: restricting any traffic between (in this article’s case) eth0 and nat64, or between eth1 and nat64, can break your NAT64 setup.